Feature stores are becoming ubiquitous in real-time model serving systems; however, there has been limited work on understanding how features should be maintained over changing data. In this talk, we present ongoing research at the RISELab on …

Are Transformers Becoming the Most Impactful Technology of the Decade?

In this presentation, Clément will provide insights into the machine learning revolution taking place in the open-source community. From the CEO who is on a mission to create the “GitHub of Machine Learning,” learn how the best-in-class …

Is Production RL at a tipping point?

Reinforcement Learning has historically not been as widely adopted in production as other learning approaches (particularly supervised learning), despite being capable of addressing a broader set of problems. But we are now seeing an exponential …

Machine Learning Platform for Online Prediction and Continual Learning

This talk breaks down stage-by-stage requirements and challenges for online prediction and fully automated, on-demand continual learning. We’ll also discuss key design decisions a company might face when building or adopting a machine learning …

Data Transfer Challenges In Evaluating MLOps Platforms

Customers evaluating MLOps platforms as a service need to provide customer data during the evaluation phase. The data often needs to be moved to the MLOps companies’ warehouses. This is not a simple task and can become costly if the two partners are …

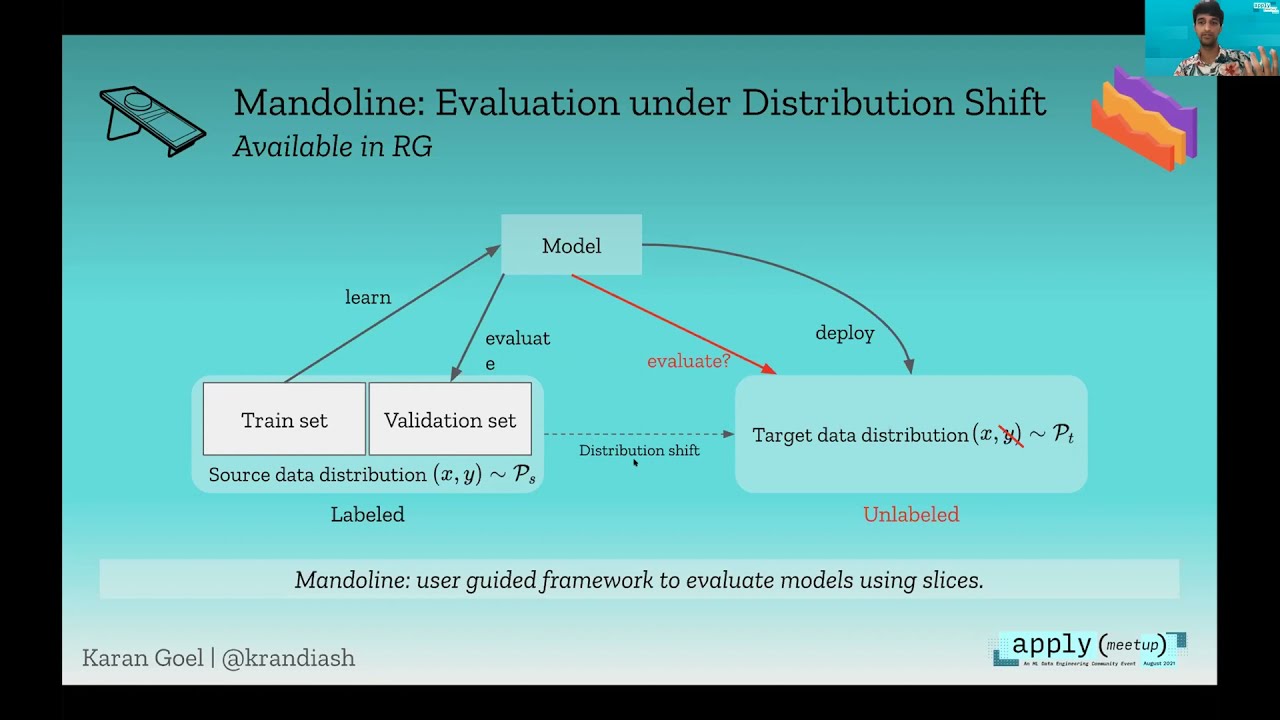

Building Malleable ML Systems through Measurement, Monitoring & Maintenance

Machine learning systems are now easier to build than ever, but they still don’t perform as well as we would hope on real applications. I’ll explore a simple idea in this talk: if ML systems were more malleable and could be maintained like …