Test and Validate Feature Quality with Tecton

Tecton powers features for production machine learning. These production environments let organizations serve over a million predictions every day, and at this scale feature quality is of the utmost importance. In this article, we look at some of the ways Tecton helps ensure feature quality in both development and production environments.

Testing in development

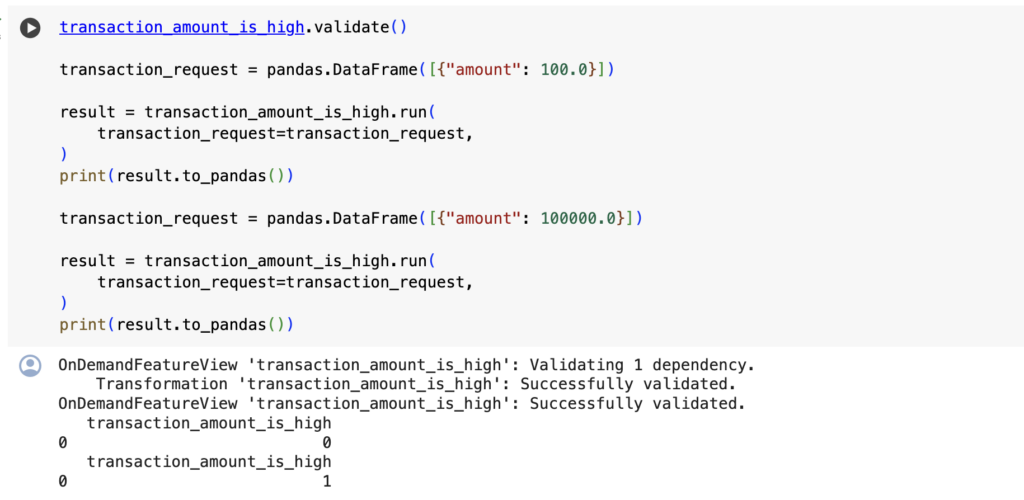

In Tecton, features are developed in a notebook and then productionized as code within a Tecton feature repository. The notebook environment supports interactive testing: you can check the output of a Tecton Feature View or Feature Service by calling its run() method. With run(), you can validate the output of your feature transformation logic immediately, before it is materialized to the offline or online store, or debug a materialization job after it has run.

Check out our notebook, Tecton Testing, on Google Colab to see run() and feature variants for yourself!

Feature variants can be helpful when developing Feature Views. These variants create branches in a feature pipeline that can be used to test out new or altered features before checking them into production.

Testing in production

Once features have been sufficiently tested, they can be promoted to production and maintained in a CI/CD environment. When powering machine learning applications in production, it is important not only to test features but also to validate and monitor their quality. Tecton’s UI provides several capabilities that help ensure models in production always receive fresh, high-quality features.

Visualizing Data Quality

Data Quality Validations help ensure that the Feature Views you have built in Tecton are generating features as expected. The Data Quality section of Tecton’s UI runs three validations per Feature View each day: that new rows are being added, that the features contain non-null values, and that the features contain non-empty values. Drilling into any of these validations takes you directly to the Feature View’s Data Quality Metrics page, which shows the percentage of rows that contain null or zero values and how that percentage compares to a previous time period, such as the previous week.
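To make the three daily checks and the null/zero percentages concrete, here is a minimal plain-Python sketch of what they measure. It is not Tecton’s implementation and calls no Tecton API; the function names, the txn_count_7d feature, the sample rows, and the definition of an "empty" value (zero, an empty string, or an empty collection) are all assumptions made for illustration.

```python
from typing import Any, Dict, List

Row = Dict[str, Any]


def is_empty(value: Any) -> bool:
    """Assumed definition of an 'empty' value: zero, an empty string, or an empty collection."""
    if isinstance(value, bool):
        return False  # treat True/False as real values, not as 1/0
    if isinstance(value, (int, float)):
        return value == 0
    if isinstance(value, (str, list, tuple, dict, set)):
        return len(value) == 0
    return False


def daily_validations(rows: List[Row], feature: str) -> Dict[str, bool]:
    """The three daily checks: new rows exist, some values are non-null, some are non-empty."""
    non_null = [r.get(feature) for r in rows if r.get(feature) is not None]
    return {
        "new_rows_added": len(rows) > 0,
        "has_non_null_values": len(non_null) > 0,
        "has_non_empty_values": any(not is_empty(v) for v in non_null),
    }


def null_and_zero_pct(rows: List[Row], feature: str) -> Dict[str, float]:
    """Percentage of rows whose feature value is null, and percentage that is zero/empty."""
    total = len(rows)
    if total == 0:
        return {"null_pct": 0.0, "zero_pct": 0.0}
    nulls = sum(1 for r in rows if r.get(feature) is None)
    zeros = sum(1 for r in rows if r.get(feature) is not None and is_empty(r[feature]))
    return {"null_pct": 100.0 * nulls / total, "zero_pct": 100.0 * zeros / total}


def null_pct_change(current: List[Row], previous: List[Row], feature: str) -> float:
    """Change in null percentage, in percentage points, versus a previous period."""
    now = null_and_zero_pct(current, feature)["null_pct"]
    before = null_and_zero_pct(previous, feature)["null_pct"]
    return now - before


# Hypothetical sample data: one null and one zero out of four rows today.
today = [
    {"user_id": 1, "txn_count_7d": 3},
    {"user_id": 2, "txn_count_7d": None},
    {"user_id": 3, "txn_count_7d": 0},
    {"user_id": 4, "txn_count_7d": 5},
]
last_week = [{"user_id": i, "txn_count_7d": i} for i in range(1, 5)]

print(daily_validations(today, "txn_count_7d"))  # all three checks pass
print(null_and_zero_pct(today, "txn_count_7d"))  # {'null_pct': 25.0, 'zero_pct': 25.0}
print(null_pct_change(today, last_week, "txn_count_7d"))  # 25.0
```

The pass/fail checks only ask whether any usable values exist, while the percentages and the week-over-week change surface gradual degradation that a simple pass/fail would miss.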

Unit tests

In both development and production environments, unit tests are critical for ensuring that features are produced in the proper format. For Tecton features, a unit test accepts mock data source inputs and asserts that a Feature View’s transformation logic produces the expected result. When you set up Tecton, tecton init creates a feature repository for defining and managing Tecton features, and unit tests can then be added to a tests/ directory within that repository. Running tecton test from a feature repository runs every test in the repository, and tecton apply applies the updates made to a feature repository only if all the unit tests pass.
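The shape of such a test can be sketched in plain, pytest-style Python. This is an illustration under stated assumptions rather than Tecton’s testing API: transaction_features stands in for a Feature View’s transformation logic, and its columns, the large-transaction threshold, the mock rows, and the file path are all hypothetical. In a real feature repository, the test would exercise the Feature View itself on mock inputs through the Tecton SDK instead of calling a bare function.

```python
# tests/test_transaction_features.py  (hypothetical path inside the feature repository)
from datetime import datetime
from typing import Any, Dict, List

Row = Dict[str, Any]


def transaction_features(transactions: List[Row]) -> List[Row]:
    """Hypothetical transformation: convert cents to dollars and flag large transactions."""
    return [
        {
            "user_id": t["user_id"],
            "timestamp": t["timestamp"],
            "amount_usd": t["amount_cents"] / 100,
            "is_large": t["amount_cents"] >= 100_000,  # assumed threshold: $1,000
        }
        for t in transactions
    ]


# Mock data source input: a few hand-written rows instead of a real batch source.
MOCK_TRANSACTIONS = [
    {"user_id": "u1", "timestamp": datetime(2024, 1, 1, 12), "amount_cents": 2_500},
    {"user_id": "u2", "timestamp": datetime(2024, 1, 1, 13), "amount_cents": 150_000},
]


def test_transaction_features_values():
    # The transformation must produce exactly these feature values for the mock input.
    expected = [
        {"user_id": "u1", "timestamp": datetime(2024, 1, 1, 12), "amount_usd": 25.0, "is_large": False},
        {"user_id": "u2", "timestamp": datetime(2024, 1, 1, 13), "amount_usd": 1500.0, "is_large": True},
    ]
    assert transaction_features(MOCK_TRANSACTIONS) == expected


def test_transaction_features_schema():
    # "Proper format": every output row has exactly the expected columns and types.
    for row in transaction_features(MOCK_TRANSACTIONS):
        assert set(row) == {"user_id", "timestamp", "amount_usd", "is_large"}
        assert isinstance(row["amount_usd"], float)
        assert isinstance(row["is_large"], bool)
```

Splitting the value check from the schema check keeps failures specific: a wrong number and a renamed or retyped column fail different tests.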

We’ve created a sample notebook to build and run unit tests on Tecton features.

Testing and validating with Tecton and Rift

Whether you are developing something new or ensuring the quality of existing features in production, Tecton provides a number of ways to test and validate features. Computing these features and running tests on them is easier than ever with Rift, Tecton’s new Python-based, AI-optimized compute engine. For more information on Rift, please see our announcement.